Yuki Abe

Ph.D. Student at Hokkaido University

Understanding the Feasibility of Auditory Hand-Steering Guidance for Blind and Low-Vision People

Yuki Abe, Rose Xin Lin, Kotaro Hara, and Daisuke Sakamoto

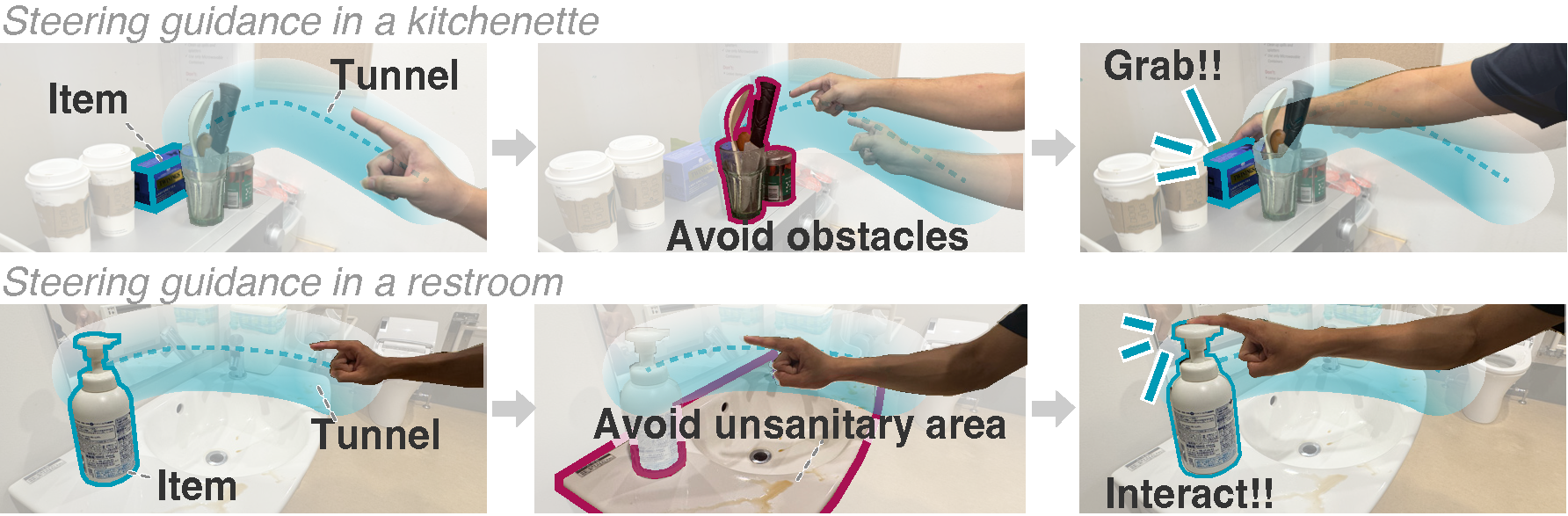

We design, develop, and evaluate two auditory guidance methods to support blind and low-vision (BLV) individuals in performing hand steering. Hand steering refers to moving one's hand along a path to a target while keeping it within a tunnel. A successful guidance method would allow BLV individuals to perform everyday tasks that involve hand steering, such as preparing tea in an unfamiliar hotel kitchenette while avoiding hazards (top), and using soap in a restroom while avoiding unsanitary areas (bottom). Our BLV participants identified these as situations where steering guidance could be useful after experiencing it in the user study.

Abstract

Everyday tasks like hand-washing and tea-making require people to steer their hands to use tools, navigating their hands to reach targets while avoiding hazards. Hand-steering becomes challenging when one cannot visually recognize whether their hand is approaching the target and staying away from hazards. Currently, no practical technological solutions support blind and low-vision (BLV) individuals' hand-steering. We designed and developed two auditory hand-steering guidance methods: VERBAL and Follow-Your-Finger (FYF). VERBAL uses spoken directional instructions, while FYF uses sonification to guide hand-steering. We conducted a user study with 12 BLV participants to evaluate the feasibility of the methods in supporting hand-steering. VERBAL lacked precision, with a 24.6% error rate in one of the easiest conditions, whereas FYF showed promise, achieving a 4.17% error rate in the same condition. Among the six participants who preferred FYF, the error rate was 1.39%. The results demonstrate the feasibility of auditory hand-steering guidance for BLV individuals.

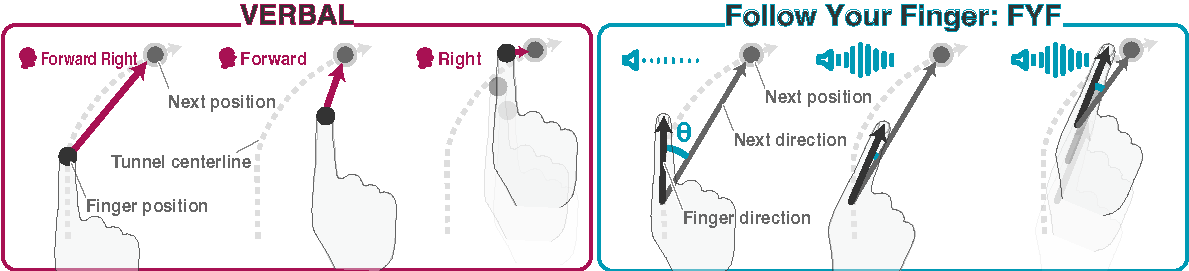

We designed two auditory guidance techniques, VERBAL and Follow-Your-Finger (FYF), to support BLV individuals in steering their hand along a mid-air tunnel connecting start and goal points in 3D space. Both techniques guide the user’s hand while minimizing deviation from the tunnel’s centerline through continuous auditory feedback. VERBAL synthesizes spoken directional instructions based on the direction from the index fingertip to the next position. FYF modulates the volume of a signal tone according to how closely the finger’s direction 𝜃 aligns with the immediate next direction.
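The two feedback mappings described above can be illustrated with a minimal Python sketch. It is not the authors' implementation. It makes several assumptions: the volume falls off linearly with the angle and reaches silence at 90 degrees, the axes are x = right, y = up, z = forward, and a single spoken word is chosen per update. The function names `fyf_volume` and `verbal_instruction` are hypothetical.

```python
import math


def angle_between(u, v):
    """Return the angle in radians between two non-zero 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / (norm_u * norm_v)))
    return math.acos(cos_theta)


def fyf_volume(finger_dir, next_dir, max_angle=math.pi / 2):
    """FYF-style mapping: louder tone when the finger points along next_dir.

    Assumption: linear falloff from 1.0 (aligned) to 0.0 at max_angle.
    """
    theta = angle_between(finger_dir, next_dir)
    return max(0.0, 1.0 - theta / max_angle)


def verbal_instruction(fingertip, next_point):
    """VERBAL-style mapping: name the dominant axis of the required movement.

    Assumption: x = right, y = up, z = forward in the user's frame.
    """
    deltas = [n - f for f, n in zip(fingertip, next_point)]
    labels = [("right", "left"), ("up", "down"), ("forward", "back")]
    axis = max(range(3), key=lambda i: abs(deltas[i]))
    positive, negative = labels[axis]
    return positive if deltas[axis] >= 0 else negative


if __name__ == "__main__":
    print(fyf_volume((1, 0, 0), (1, 0, 0)))
    print(verbal_instruction((0, 0, 0), (0.1, 0.5, -0.2)))
```

In this sketch, FYF gives continuous feedback that rewards small corrections in pointing direction, while VERBAL reduces the same geometry to a discrete word. This is one plausible reading of why the two methods could differ in precision.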

Video

10-minute video presentation

Publication

Yuki Abe, Rose Xin Lin, Kotaro Hara, and Daisuke Sakamoto. Understanding the Feasibility of Auditory Hand-Steering Guidance for Blind and Low-Vision People. In Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems (CHI ’26). ACM, New York, NY, USA, 16 pages. [DOI]

Yuki Abe, Kotaro Hara, Daisuke Sakamoto, and Tetsuo Ono. Exploring Auditory Hand Guidance for Eyes-free 3D Path Tracing. In Proceedings of the Extended Abstracts of the CHI Conference on Human Factors in Computing Systems (CHI EA ’25). ACM, New York, NY, USA, 10 pages. [DOI] [Poster]

Paper

Supplementary Material